This week I’m bringing you this article from my hotel in Shanghai. I’m in China to present Unix to VMware Migration workshops. Last week I made the trek to Las Vegas, along with around 12,000 other people, for the annual HP Discover US event (June 11th – 13th). This was my first time at HP Discover and I was very grateful to HP and Ivy Worldwide for making this opportunity possible. HP Discover is not like any other IT event that I’ve been to and HP certainly know how to put on a magnificent show. Almost all of the Sands Expo Center at the Venetian was taken up by the exhibition stands of different HP divisions, with the remainder for sponsors. In terms of number of attendees it’s around half the size of VMworld, but it lacks nothing in terms of spectacle. HP’s slogan for the event was “Build a Better Enterprise Together”, and the big themes, in the order of my interest, were Big Data, Software Defined Networking, Software Defined Storage, Converged Infrastructure and Moonshot. I will briefly cover what I consider the highlights in this article.

Just to give you an appreciation for the size of this event here is a photo that shows a fraction of the show floor at the Sands Expo Center at the Venetian Las Vegas:

Day 1 Event Opening Keynote

Kevin Bacon opened up the event by describing the concept of the 6 degrees of separation and the 6 degrees of Kevin Bacon, which was an internet sensation for some time. He quickly then explained the explosive growth of data (Big Data) and how the world is creating more data every day than was created prior to 2003. My first thought was it must be good to be in the storage business. Here is a photo of Kevin on Stage with some of the data statistics. With the explosive growth of social networking it’s more like 4.7 degrees of separation according to Bacon’s presentation.

Meg Whitman, HP CEO, then took the stage and continued to explain the significance of Big Data and HP’s overall strategies, including sharing a compelling case study from NASCAR. NASCAR are using HP Big Data, Software and Hardware solutions to make NASCAR an even more intense experience and to monitor and adapt their business in real time, bringing the fans closer to the action and taking in feeds from video, voice and social media. Meg also spoke about the transition from the Mainframe world to the client server world, and how the world is now changing again in another revolutionary step to a mobile, social and real time business environment. I know from first-hand experience how the new paradigm is taking shape. Traditional Mainframe and Unix platforms are being aggressively migrated to industry standard servers running VMware vSphere, and applications are being rewritten to adapt to the cloud and mobile era. HP’s argument is that this new era requires a revolution in server infrastructure and this is where their Moonshot Servers come in.

Moonshot servers are ultra compact and need less power than a 60W light bulb. HP.com, which receives more than 3 million visitors per day, is running on 12 Moonshot servers consuming approximately 720W of power. HP believes this will save the Internet: by its estimates 10 million servers will be required in the next few years, data is doubling every six months, and every million servers requires not only a huge investment in datacenters but also an entirely new power plant. The Moonshot servers not only consume less power, but they also cost a lot less and consume significantly less space. In the day 2 keynote we heard that currently you can get 450 Moonshot servers per rack, which is set to increase to 1800 servers per rack in the future. The Moonshot servers run one of three different CPU options: ARM, low powered Intel or Nvidia GPU. Future versions will also be suitable for virtualization, which will open up all new possibilities for hosting and cloud environments to scale dynamically. By my estimations you can already get approx 3072 Virtual Machines with 2 vCPU and 8GB RAM per rack, if not more, if you’re running VMware vSphere on blade infrastructure. So some more number crunching will be required on Moonshot.
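As a start on that number crunching, here is a minimal Python sketch of the arithmetic behind the figures above. It only uses the numbers quoted in the keynotes and my own blade estimate. The function names are made up for illustration, and the rack power figure assumes every cartridge draws the same as the HP.com ones and ignores chassis, switch and fan overheads, so treat it as a rough guide rather than anything HP has published.

```python
# Figures as quoted in the keynotes and in my blade estimate above.
HP_COM_SERVERS = 12
HP_COM_TOTAL_WATTS = 720
SERVERS_PER_RACK_TODAY = 450
BLADE_VMS_PER_RACK = 3072
VCPUS_PER_VM = 2
GB_RAM_PER_VM = 8


def watts_per_server(total_watts: float, servers: int) -> float:
    """Average power draw per server."""
    return total_watts / servers


def rack_kilowatts(servers_per_rack: int, watts_each: float) -> float:
    """Rack power in kW, assuming (hypothetically) identical draw per
    server and no chassis, switch or fan overhead."""
    return servers_per_rack * watts_each / 1000


def blade_rack_totals(vms: int, vcpus_per_vm: int, gb_per_vm: int):
    """Total vCPUs and total RAM (in TB, 1 TB = 1024 GB) implied by a
    given VM count per rack."""
    return vms * vcpus_per_vm, vms * gb_per_vm / 1024


if __name__ == "__main__":
    per_server = watts_per_server(HP_COM_TOTAL_WATTS, HP_COM_SERVERS)
    print("Watts per Moonshot server:", per_server)
    print("kW per rack at today's density:",
          rack_kilowatts(SERVERS_PER_RACK_TODAY, per_server))
    vcpus, ram_tb = blade_rack_totals(BLADE_VMS_PER_RACK, VCPUS_PER_VM,
                                      GB_RAM_PER_VM)
    print("Blade rack estimate:", vcpus, "vCPUs and", ram_tb, "TB of RAM")
```

The 720W for 12 servers works out to exactly the 60W light bulb figure per server, and my blade estimate implies around 24TB of RAM in a rack, which gives a feel for what a Moonshot rack would need to match once it supports virtualization.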

So taking a lot of power requirements out of the servers is great, but my overriding question is what about the storage that is needed to power these monster clusters and the huge amount of data growth. Storage has traditionally consumed a lot of space and power. The storage side of the equation wasn’t covered during the keynote presentation, but this shouldn’t detract from the overall achievements of the Moonshot platform for the niche applications that it can be used for currently. One thing is for sure: the advances in flash based storage will greatly help with power consumption and performance per watt. More on HP Storage later.

HP’s message during the keynote really showed they are trying to be all things to all people (mission impossible?). They seem to be trying to beat the likes of IBM, and to a certain extent Oracle, at their own game. They want to partner with their customers to build a better enterprise together, which is the right approach in my opinion and a good goal. Some of the products and solutions presented come across as a little ‘me too’ without real innovation. Only time will tell if they can actually pull off the aim of being the best company to provide a solution to every problem. Execution will be key. There is still duplication and lack of integration between solutions and offerings that will need to be sorted out.

Here are a couple of the key images from Meg Whitman’s keynote presentation:

Day 2 Keynote

The day 2 keynote was all about the technology. HP showcased Servers, Storage, Networking, Software and Services. What I found very interesting from the day 2 keynote was how little attention was paid to the traditional Unix systems. Instead, all the attention is going towards what HP term Industry Standard Servers and even their Business Critical / Mission Critical x86 systems, such as the DL980s. I guess this is acknowledgment of a well established trend away from Unix platforms. One of the coolest products shown during this keynote from a server perspective was a very compact ROBO server termed ‘baby’s first datacenter in a box’. I think this could actually be a hit. I know my kids already have their first datacenters, but the real use case is for remote office / branch office where you don’t want to have a computer cupboard anymore. The small server was very compact and also very quiet. Moonshot, its roadmap and its results were interesting to see from a revolutionary server platform perspective, as I mentioned when covering the day 1 keynote. The Gen 8 Servers got a good showing and there were some impressive industry leading benchmarks published during the event, such as the VMMark results where HP Gen 8 servers powered by Fusion-io took the lead.

I missed the morning Storage Press Conference due to a conflicting appointment but there was plenty of storage as part of the day 2 keynote. The key storage platform announcements from my perspective were the all flash 3Par array, the 7450, and what HP is doing in the software defined storage space. Overall the vision is good but the strategy isn’t quite complete, and some components aren’t quite there yet technically. But they will be before too long. The good thing about the 3Par 7450 is that it is built on the same 3Par architecture, so it can leverage the same management tools and software capabilities as the other arrays. Its performance in the benchmarks discussed was pretty good – 554K IOPS at 4KB IO Size and 0.7ms latency for random read. This was from a fully populated system. This isn’t quite as fast as some competing storage systems, but you need to consider that the platform includes all the other capabilities of 3Par, including all the replication support etc. 3Par has good scalability in the platform and includes a lot of features as standard, but still lacks a few high end capabilities that some competitors bring to the table (more on this later). As a comparison I can get 187K random read IOPS at 0.5ms latency from a single VM connected into one of my vSphere hosts from a single Fusion-io ioDrive2 card, but of course this doesn’t have the features of a full 3Par array, such as replication etc. 3Par does seem to be gaining ground though, with 1500 new customers and over $1 billion in revenue in 2012. Plus they have the switch to 3Par guarantee (provided you’re not using thin provisioning or dedupe already) that you’ll get 2 x the VM density.
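To put those IOPS and latency numbers side by side, here is a small Python sketch. It converts IOPS at a given IO size into throughput, and uses Little’s Law (IOs in flight = IOPS x latency) to estimate how much concurrency each result implies. The function names are mine, and I’m assuming 4KB means 4096 bytes and that the quoted latencies are averages. Neither the 7450 benchmark details nor my own test setup are modelled here.

```python
def throughput_gb_per_s(iops: float, io_size_bytes: int) -> float:
    """Throughput in decimal GB/s for a given IOPS rate and IO size."""
    return iops * io_size_bytes / 1e9


def outstanding_ios(iops: float, latency_seconds: float) -> float:
    """Little's Law: average number of IOs in flight needed to sustain
    the given IOPS at the given average latency."""
    return iops * latency_seconds


if __name__ == "__main__":
    # 3Par 7450 figures quoted in the keynote (4KB assumed to be 4096 bytes).
    print("7450 throughput GB/s:", throughput_gb_per_s(554_000, 4096))
    print("7450 IOs in flight:", outstanding_ios(554_000, 0.0007))
    # My single VM, single ioDrive2 card result.
    print("ioDrive2 IOs in flight:", outstanding_ios(187_000, 0.0005))
```

On those assumptions the 7450 result is a bit under 2.3GB/s of small random reads and needs several hundred IOs in flight, which is what you would expect from a fully populated array driven by many hosts rather than a single VM.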

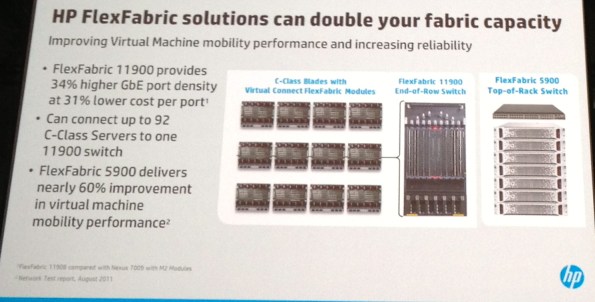

Next up came networking, with HP claiming the #2 position behind Cisco and saying it is bigger than the next 5 network vendors combined in terms of revenue. Really the #1 or #2 spot would go to VMware if it were purely on number of ports (if you include physical and virtual ports), but the comparison was based on revenue. I’ve always liked the HP datacenter switches since I first used them more than 10 years ago. They’ve always been easy to manage, very feature rich, and lightning fast. This year they introduced a number of enhancements to flex fabric where a single 11900 switch could support 92 C-Class Servers. HP was also flexing its SDN muscle, touting 40 OpenFlow switches and 20M installed ports. I was particularly interested in HP’s announcements around the 5900V virtual switch for VMware vSphere. I thought this would be a serious play against the Cisco Nexus 1000v. Unfortunately all it does is bypass the hypervisor networking stack and implement the VM networking on a physical switch. This not only adds latency to traffic that would otherwise pass between VMs on the same host, it doesn’t allow the performance of in-hypervisor security modules, and it means you’re locked into the physical HP network hardware stack, which greatly reduces your flexibility. At least with the VMware Distributed Switch or the Cisco Nexus 1000v you can use any hardware switches you like, including HP’s.

HP covered their new converged and open Cloud offering based on OpenStack briefly. But unfortunately they forgot to mention that it’s not even compatible with HP’s other Cloud service, which is offered by HP Enterprise Services, called the HP ES VPC. Compatibility between the two clouds is coming in the future apparently. In the meantime HP ES VPC Cloud runs on VMware vSphere and the new HP Converged Cloud runs on OpenStack. This seems similar to the IBM OpenStack Cloud that was announced recently and adds additional competition to the other offerings in the cloud market.

Big Data was big news at HP Discover and during the day 2 keynote we got to dig a little deeper into HP’s Big Data solution called HAVEn. HAVEn is an acronym that stands for Hadoop Autonomy Vertica Enterprise Security for n applications. It’s a platform that can integrate many different types and streams of data, both structured and unstructured, and analyse them in near real time. It was especially interesting to review the NASCAR case study again to see how they are using real time big data analytics from all sorts of different data feeds, including social networking, to make business decisions and improve their business. The HP Discover event itself was also using HAVEn to measure sentiment, influence and other factors around the event to gain insight into what was going on, which is a great demonstration of the platform’s real world capabilities. I’ll show you a photo of the HAVEn implementation for HP Discover below.

The most interesting aspect for me was the way that Autonomy could take unstructured data and make sense of it, giving it context, while Vertica could analyse structured data at lightning speeds. But what sort of data? Well it’s not just limited to social networking and business data but also other relevant and important data. The HAVEn platform can take huge amounts of data from different sources and correlate them all. The types of data HP described were machine data, such as sensors, logs and system metrics, business data, such as from CRM systems, and human information, such as voice feeds and video. Another real life example HP gave during the keynote is how the Venetian|Palazzo Casino Hotel used the HP HAVEn platform to monitor all the gaming systems and the Casino gaming floor and identify possible issues in real time, including integration with all the camera systems etc. They gave a demonstration of how HAVEn could be used to solve a system performance issue, but to be honest this demo really missed the mark as it didn’t demonstrate HP’s unique capabilities. VMware vCenter Operations could have done the same thing, and a lot more quickly, for the use case HP presented. However if they had integrated the demo system with data from the call center voice recordings and online social networking to determine severity and trends before it became a major issue, and then solved the problem, it would have been much more relevant. Given recent media events it was hard to avoid comparing what HAVEn could do to what had come out about PRISM. No comment either way was forthcoming from HP, which is not surprising. The need to scale your big data systems up and down and test them efficiently lends itself to good virtualization use cases.
There is a lot of work going into optimizing VMware vSphere environments to run Hadoop and there is no reason why HAVEn couldn’t run just as well in a virtual environment, provided you design it properly and test and verify it to make sure it’s working to your requirements. It will be interesting to see how this technology develops in the future. But the electronic big brother is already watching you. So you can’t assume any privacy for any information you make available online. Just assume that anything you say or do on the Internet could be on the front page of the news or someone’s blog tomorrow.

Here are some of the key slides from the day 2 keynote:

Here is a photo of the HAVEn system that was monitoring HP Discover. You might notice that I made a bit of an impact on day 2. I was the major influencer for pretty much all of day 2.

Coffee Talks

One of the best parts of being invited to be a blogger at HP Discover was the coffee talks that were arranged so that we could meet all the key executives and subject matter experts for the different areas across HP. This allowed us to drill down on key points not covered during the keynotes. Below I’ll give you some very brief highlights from each of the coffee talks.

Big Data and HAVEn (Hadoop, Autonomy, Vertica, Enterprise Security for n applications)

Machine info, business info and human info are correlated and available in one place, providing context and support for real time decision making. Examples include determining caller sentiment from voice calls (whether they are likely to stop doing business with you) and whether they are lying, which allows offers and decisions to be made in real time to prevent lost customers and improve customer experience. You can take data from camera feeds to determine in real time if someone is cheating (in a Casino for example), or if there is a health concern or possible security event, such as a door opened at the wrong time of day. This technology is similar to a number of scientific discoveries in that it has the potential to do immense good but could also be used and abused for the wrong reasons. It also gives hackers a high value target. If you hack the big data systems you potentially have access to every piece of important data all in one place and correlated between many sources. The security and privacy of the data is a great concern, and not just access to the raw data but also the search results across the data. We discussed how HAVEn could be used to make systems more secure by providing context based security, such as location data and detecting duress in someone’s voice. There is massive data growth being driven by machine data, such as seas of sensors and massive telemetry data, and human information, such as voice and video data.

Mobile Apps

The overall conclusion from the Mobile Apps coffee talk was that if you really want to take advantage of mobile and give a good user experience then you need to completely rewrite your apps and you can’t really leverage your existing investments. I don’t agree with this and I think you should be able to take a hybrid approach that gets even greater value out of your existing apps while making your business much easier and more responsive to your customers using a mobile and social online strategy. HP’s argument was that you mobilise transactions, you don’t mobilise applications.

HP gave an example of Mary Kay cosmetics, where 20% of agents are joining the business because of how easy the mobile app is to use and the support across any device. Having the mobile app has massively boosted Mary Kay’s business. The app supported 240K contractors. This example was impressive and quite compelling. It really shows the positive impact that purpose built mobile apps can have for the right transactions and interactions.

We did have an interesting discussion on security and the death of the password in favor of multi-factor authentication. There are a number of problems with traditional password security and verification (remembering many different passwords is one such challenge) and I think it is high time it was replaced by multi-factor means of verifying identity. Only time will tell how this plays out. But contextual security will be important also, such as determining your location and the authenticity of your identity based on usual location, usual usage patterns, and the usual devices you use, in addition to traditional factors for authentication.

Storage

We spent a bit of time going through the 3Par storage system architecture and especially the details of the new 3Par 7450 All Flash Array. I like the way the 3Par architecture is highly parallelised. This makes it great at handling lots of random IOs, which is very normal for virtualized environments. HP covered the new Peer Persistence feature in the 3Par system, which will allow 3Par to attain the VMware vMSC certification for deployment in stretched cluster environments (similar to what the HP P4000 system can do now). HP wasn’t sure how long the VMware vMSC certification process would take, but it is underway. There were a couple of gotchas with this new Peer Persistence feature: you must implement a uniform storage access configuration, and each LUN is active/passive, not active/active, across sites. The uniform storage access is much more complex to implement and maintain and doubles the number of paths zoned to each host. This effectively halves the maximum number of LUNs you can have configured per host, as you need redundant paths to each array at each site. The LUNs being only active for read / write at one site is also not ideal, as any VMs executing on the opposite site will have additional latency for all their storage accesses back to the primary site for the LUN. 3Par Peer Persistence is trying to compete against EMC VPLEX and other vMSC solutions (such as NetApp), but it’s not quite on par yet due to no support for SRM on top of the stretched cluster (unless using hypervisor based replication), and the lack of support for active / active read/write LUNs and non-uniform storage access. All in all though, if you had an existing 3Par implementation and wanted to have a stretched cluster between two active datacenters (provided they are within the distance limitations <10ms) then you could now do it.
Many 3Par customers I spoke to were very interested in this new enhanced feature, which also includes provision for a witness. They were also very happy with the performance and manageability of their existing 3Par systems. Most 3Par customers like the fact that they can start with an entry level 3Par and grow it as their needs increase while keeping consistency of management platforms.
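The point about uniform access halving the number of LUNs per host can be sketched with some simple path arithmetic in Python. The limits used here, 1024 paths and 256 LUNs per host, are the vSphere 5.x configuration maximums as I recall them, so check the Configuration Maximums document for your version. The four paths per LUN per array is purely a hypothetical fabric design for illustration.

```python
# Assumed vSphere 5.x per-host limits; verify against the official
# Configuration Maximums for the release you are running.
MAX_PATHS_PER_HOST = 1024
MAX_LUNS_PER_HOST = 256


def max_luns(paths_per_lun_per_array: int, arrays_visible: int) -> int:
    """Maximum LUNs a host can address given the paths each LUN
    consumes. With uniform access every host sees the arrays at both
    sites, so arrays_visible is 2 instead of 1."""
    paths_per_lun = paths_per_lun_per_array * arrays_visible
    return min(MAX_LUNS_PER_HOST, MAX_PATHS_PER_HOST // paths_per_lun)


if __name__ == "__main__":
    # Hypothetical design: 4 paths per LUN to each array.
    print("Single site:", max_luns(4, arrays_visible=1), "LUNs")
    print("Uniform stretched cluster:", max_luns(4, arrays_visible=2), "LUNs")
```

With that hypothetical design the path limit becomes the constraint as soon as the second array is zoned in, and the usable LUN count drops from 256 to 128.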

Networking

There was quite a bit of discussion around network management and monitoring and using OpenFlow combined with Blueprinting to roll out applications on top of virtual networks. The blueprinting capabilities, which basically allow you to deploy an application anywhere and have its networking automatically configured, are very compelling. This was however limited to OpenFlow based networks.

I was interested in how the networking hardware was going to be made better to support the new Software Defined Networks, Network Overlays and Network Virtualization technologies. But unfortunately there appears to be nothing in the pipeline to build mechanisms into network hardware to reduce the overheads associated with network layer virtualization or overlay technologies, nor to increase MTU sizes, which will become increasingly important as Ethernet reaches large scale 40G and 100G adoption. It appears to be HP’s opinion that an MTU of 9K or more is not required and that most people are, and should be, still running 1500. This is in spite of a 10% performance improvement when using Jumbo Frames on 10G links, and this performance improvement is only going to increase as the Ethernet bandwidths scale up.
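To show where part of the Jumbo Frames benefit comes from, here is a rough Python sketch of wire efficiency and frame rate at different MTU sizes. It assumes plain TCP over IPv4 with no options, no VLAN tag and no overlay encapsulation, with standard Ethernet framing overhead of 38 bytes per frame (preamble, header, FCS and inter-frame gap). It doesn’t model CPU or interrupt cost, so it won’t reproduce the 10% figure by itself. The function names are mine.

```python
ETHERNET_OVERHEAD = 8 + 14 + 4 + 12   # preamble/SFD, header, FCS, inter-frame gap
IP_TCP_HEADERS = 20 + 20              # IPv4 and TCP headers, no options (assumed)


def wire_efficiency(mtu: int) -> float:
    """Fraction of bits on the wire that are TCP payload."""
    return (mtu - IP_TCP_HEADERS) / (mtu + ETHERNET_OVERHEAD)


def frames_per_second(mtu: int, link_bits_per_s: float) -> float:
    """Full-size frames per second needed to saturate the link."""
    return link_bits_per_s / ((mtu + ETHERNET_OVERHEAD) * 8)


if __name__ == "__main__":
    for mtu in (1500, 9000):
        print(f"MTU {mtu}: efficiency {wire_efficiency(mtu):.4f}, "
              f"frames/s at 10G {frames_per_second(mtu, 10e9):,.0f}")
    gain = wire_efficiency(9000) / wire_efficiency(1500) - 1
    print(f"Payload gain from Jumbo Frames: {gain:.1%}")
```

On these assumptions the header savings alone are worth a little over 4%, and the host has to process almost six times fewer frames per second at line rate. That second effect is presumably where the rest of the improvement comes from, and it grows with link speed.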

Converged Cloud

HP launched what they call their Converged Cloud, which is based on OpenStack. It isn’t currently compatible with the HP Enterprise Services VPC cloud offering (note the duplication and lack of integration I mentioned earlier), which is based on vSphere. You can’t migrate between the two different HP clouds, but this will apparently be changing in the future. This appears to be a play in competition with RackSpace, Amazon and VMware vCloud Hybrid Service (vCHS). With vCHS, customers can easily move their existing workloads from any VMware environment to a hybrid cloud model, and to any of the many partners’ VMware based clouds, without any modifications. This level of interoperability isn’t yet available with the OpenStack clouds, but no doubt it will be there in the future. OpenStack right now seems very much like the early days of Linux, where there are so many different distributions and choices.

Converged Infrastructure

HP’s converged infrastructure should really be called pre-packaged infrastructure rather than converged, as it lacks converged management, many operations still require standalone management tools, and it doesn’t update as one whole platform. It can, however, potentially scale and change more easily than other converged platforms. Still, this makes it very easy for customers to purchase and deploy HP’s technology. This is really HP’s first step to converged infrastructure and they’re working hard to converge the management and lifecycle processes together. It’s still early days yet, but vBlock and newer converged offerings such as Nutanix have got the jump on HP and still lead while HP catches up. Some of the people at the conference were likening the HP converged infrastructure to what VMware provides in the vCloud Suite currently: a single SKU to purchase, but really a number of products that aren’t well integrated. If you’re an existing HP customer the new converged SKUs will make purchasing HP technology much easier and much quicker to implement, as it comes pre-packaged to your specifications from the factory / distribution. Overall this will decrease your time to market and also decrease your TCO. Once they have the management side of things sorted out it’ll break down the IT silos and also further decrease the operational costs of the infrastructure, as you’ll be able to manage the complete platform as one.

Here is a photo of the HP Virtual Converged Infrastructure Rack (right). This is a virtual demo controlled by an HP tablet where they can show you everything about the converged infrastructure platform, but without having to carry around a full implementation. This is rather a great bit of kit. The rack on the left is the actual converged platform based on an entry level solution that can then be easily expanded.

Visited Sponsors Stands

I took some time out to visit some of the sponsor stands at the show and I’m really glad I did. There were some excellent sponsors at the event and I learned quite a lot. Here are some of the highlights from the sponsors at HP Discover.

AVT

AVT is a company that provides a solution that allows customers to migrate OpenVMS, VMS Clusters and Alpha systems to VMware on HP systems without code changes. This means that any existing customers that want to retire their old hardware can do it without the massive costs associated with redeveloping their applications. AVT is the only HP supported solution that allows this type of migration. You can easily run multiple VAX or Alpha systems per host. The main advantages are removing the risk of running on old or retired hardware, prolonging the value of your VAX and Alpha software, and avoiding costly and complex software migrations. There is also good potential for performance improvements when going to the latest generation hardware platforms. The company estimates there are still 500k VAX and Alpha systems in existence, and that’s not surprising. So if you do happen to have a VAX or Alpha in your datacenter it would pay to get in touch with AVT.

Fusion-io

It was good to see Fusion-io at HP Discover and especially good to learn about their spectacular performance results (as mentioned earlier). HP along with Fusion-io set some world record VMMark results, and achieved 2.2 million IOPS and 24GB/s for an Oracle workload against an ION storage system. Fusion-io had the performance demo running live on the show floor and I hope they bring this to VMworld this year as well and run a Virtual Machine benchmark against it on the latest VMware release. To reach this spectacular performance HP and Fusion-io used a Gen 8 DL980 with 6 x dual port 16Gb/s Qlogic HBAs going via a Brocade 6510 16Gb/s FC switch to 3 x Gen 8 DL380 servers, each with 2 x dual port Qlogic 16Gb/s HBAs and 4 x 2.4TB HP IO Accelerators (Fusion-io cards), 28.8TB in total. This is a surprisingly small configuration for such a massive performance result. It certainly demonstrates the potential of not only the Fusion-io technology but also the HP Gen 8 servers.
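For anyone who wants to check the sums on that configuration, here is a short Python sketch that adds up the ports, cards and capacity as described above. The per-card IOPS figure is simply the headline number divided evenly across the cards, which is my own simplification and not something HP or Fusion-io stated.

```python
# Configuration as described on the show floor.
INITIATOR_HBAS = 6            # in the DL980
TARGET_SERVERS = 3            # DL380 servers
HBAS_PER_TARGET = 2
PORTS_PER_HBA = 2
CARDS_PER_TARGET = 4
TB_PER_CARD = 2.4
HEADLINE_IOPS = 2_200_000


def fc_ports(hbas: int, ports_per_hba: int) -> int:
    """Total Fibre Channel ports across a set of HBAs."""
    return hbas * ports_per_hba


def total_cards(servers: int, cards_per_server: int) -> int:
    """Total IO Accelerator cards across the storage servers."""
    return servers * cards_per_server


if __name__ == "__main__":
    cards = total_cards(TARGET_SERVERS, CARDS_PER_TARGET)
    print("Initiator FC ports:", fc_ports(INITIATOR_HBAS, PORTS_PER_HBA))
    print("Target FC ports:",
          fc_ports(TARGET_SERVERS * HBAS_PER_TARGET, PORTS_PER_HBA))
    print("Cards:", cards, "for", round(cards * TB_PER_CARD, 1), "TB")
    # Assumption: load is spread evenly across all cards.
    print("IOPS per card:", round(HEADLINE_IOPS / cards))
```

The initiator and target sides both end up with 12 FC ports, so the fabric is balanced, and an even spread would put each card at roughly 183K IOPS, which is in the same range as the single card result I mentioned in the storage section of the keynote coverage.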

TIBCO

I had been getting a lot of requests recently from customers that wanted to virtualize various types of TIBCO workload, including DataSynapse Federator and GridServer. I thought the best way to find out what the support situation was would be to ask TIBCO themselves. I went to the stand and they were extremely helpful. Within a few minutes I was connected to the VP of GridServer and he confirmed that large scale deployments of GridServer were indeed supported on VMware vSphere. Here is what they said:

* Yes, you could run 6000 GridServer engines (or any number), each on a virtual machine, provided the underlying physical infrastructure was sufficient. (This is pretty much what TIBCO DSPG QA lab looks like.)

* Yes, you could have 6000 separate grids, each on its own virtual infrastructure, again provided the underlying physical infrastructure was sufficient.

* You should not attempt to run 600 or 6000 anythings on a single VM.

This last comment is of course common sense. GridServer is Java based so the best practices with regards to Java and Low Latency Workloads on VMware vSphere apply. If you weren’t already aware there is a low latency setting in the VMware vSphere 5.1 Web Client available for those workloads that need low latency.

Mellanox

I went past the Mellanox stand to find out what the latest was from their product lines. I was mainly interested in their Ethernet products. Previously their 10G NICs had been quite hard to configure and get working on VMware vSphere, and they would show up as phantom NICs and sometimes not be available immediately at boot time. I’ve been told this problem has been fixed with the latest firmware and drivers. It was very interesting to see that Mellanox is providing 40GbE and 56GbE cards, which include support for VMware. The 40GbE NICs and switches have a latency of 220ns, compared with 270ns for 10GbE ports.

The Mellanox SX1018 40GbE C Class Blade Switch, which provides 220ns latency, supports 18 full speed 40GbE ports out of the C Class Chassis. That’s 720Gb/s of low latency bandwidth from a single chassis. I didn’t go into the costs and I’m sure it’s not going to be all that cheap, but it’s certainly impressive from a performance point of view.

Based on what I discussed with Mellanox at their stand I think converged network / storage fabrics are the way of the future with clear leadership in Ethernet over straight FC. When it comes to networking history has shown that Ethernet always wins. With low latency and non-blocking QoS available on high speed Ethernet ports there really is no reason to have traditional FC fabrics and switches if you’re making new investments. 40GbE is here now and 100GbE will probably be available before the end of 2013, at which point 40GbE will start to come down in price.

You can forget about running 40GbE on a Gen 2 PCIe slot. With these sorts of speeds you’re going to have to be running PCIe v3 to be able to get the bandwidth, like you get on the HP Gen 8 servers. With all the advances in networking that are going to be upon us shortly it’s certainly going to make things interesting in the datacenter.
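The PCIe point is easy to check with a little Python. This sketch works out the usable bandwidth of a slot from the per-lane signalling rate and line encoding for each PCIe generation (5GT/s with 8b/10b for Gen 2, 8GT/s with 128b/130b for Gen 3). I’m assuming an x8 slot, which is my assumption about the typical NIC form factor and not something Mellanox specified, and I’m ignoring PCIe packet overheads, so real world numbers will be somewhat lower.

```python
from fractions import Fraction

# Per-lane transfer rate in GT/s and line encoding efficiency.
PCIE_GENERATIONS = {
    2: (5, Fraction(8, 10)),      # 8b/10b encoding
    3: (8, Fraction(128, 130)),   # 128b/130b encoding
}


def slot_gbps(generation: int, lanes: int) -> float:
    """Usable bandwidth of a slot in Gb/s, one direction, after line
    encoding but before any PCIe packet overhead."""
    rate, encoding = PCIE_GENERATIONS[generation]
    return float(rate * encoding * lanes)


def can_feed(port_gbps: float, generation: int, lanes: int = 8) -> bool:
    """True if the slot has at least as much bandwidth as the port."""
    return slot_gbps(generation, lanes) >= port_gbps


if __name__ == "__main__":
    for gen in (2, 3):
        print(f"PCIe Gen {gen} x8: {slot_gbps(gen, 8):.1f} Gb/s, "
              f"enough for 40GbE: {can_feed(40, gen)}")
```

An x8 Gen 2 slot tops out at 32Gb/s, which is short of a single 40GbE port, while an x8 Gen 3 slot has around 63Gb/s to play with.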

VMware

VMware had a great stand at HP Discover covering Software Defined Datacenter and End User Computing. They were also represented well on various HP stands and other partner stands around the event. Here is a photo of the VMware stand.

Final Word

HP Discover was a great event and I highly recommend it to any current or potential future HP customer. If you can’t make the USA event, HP Discover is also on in Europe. This year it’s in Barcelona in December. Apart from all the great technology on display at HP Discover, one of the highlights was the HP Discover Party on Wednesday night. HP hired out the MGM Grand Arena and had Los Lonely Boys and Santana entertain the conference attendees. This was one awesome show and the entire crowd really enjoyed it. They played all the crowd favourites and HP put on plenty to eat and drink as well. This is one of the best, if not the best, vendor sponsored parties that I’ve ever been to.

I and the other bloggers were also fortunate to get to spend some time with the HP CEO Meg Whitman. Here is a photo of all of us together.

Note: Travel to HP Discover 2013 was paid for by HP; however, no monetary compensation is expected nor received for the content that is written in this blog.

—

This post first appeared on the Long White Virtual Clouds blog at longwhiteclouds.com, by Michael Webster. Copyright © 2013 – IT Solutions 2000 Ltd and Michael Webster. All rights reserved. Not to be reproduced for commercial purposes without written permission.